The migration from proprietary analytics platforms to open lakehouse architectures is no longer an emerging trend — it is the dominant strategic direction for enterprise data organizations. Gartner, Forrester, and IDC have all documented the accelerating shift away from monolithic, vendor-locked platforms toward composable, cloud-native data stacks built on Snowflake, Databricks, and their open-source foundations.

But what is driving this shift, and why is it accelerating now? The answer lies in the convergence of five powerful forces: economics, talent, technology, ecosystem, and regulation. Understanding these forces helps executives build a compelling business case for migration and time their investments for maximum impact.

1. Rising Licensing Costs and Inflexible Pricing

Proprietary analytics platforms like SAS, SPSS, and Informatica PowerCenter use seat-based or capacity-based licensing models that were designed for an era when computing was scarce and centralized. In that world, paying a premium for a tightly integrated analytics environment made sense. In the cloud era, these pricing models create increasingly painful misalignments.

SAS licensing typically costs $5,000 to $15,000 per user per year for Base SAS, with enterprise modules adding $20,000 to $50,000+ per server. These costs are fixed regardless of actual usage. An organization with 200 SAS seats may find that only 80 are actively used in any given month, but the contract covers all 200.

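Cloud-native platforms, by contrast, use consumption-based pricing. Snowflake bills compute per second while a virtual warehouse is running, with a 60-second minimum each time it starts or resumes. Databricks bills by the Databricks Unit (DBU), a normalized measure of the processing capacity a cluster consumes while it runs. When no compute is running, you pay nothing for compute; only storage continues to accrue charges. This model aligns cost with value in a way fixed seat licensing cannot.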
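
To make the misalignment concrete, here is a minimal Python sketch of the arithmetic. The seat count, active-user count, and low-end seat price come from the example above; the compute rate is a hypothetical placeholder, not a quoted Snowflake or Databricks price, so treat the break-even figure as illustrative only.

```python
def seat_license_cost(seats: int, price_per_seat: float) -> float:
    """Annual cost under seat licensing: every contracted seat is billed."""
    return seats * price_per_seat


def seat_utilization(active_users: int, seats: int) -> float:
    """Fraction of contracted seats that are actually used."""
    return active_users / seats


def break_even_compute_hours(annual_license_cost: float, rate_per_hour: float) -> float:
    """Metered compute hours that would cost the same as the seat contract."""
    return annual_license_cost / rate_per_hour


# Figures taken from the example in the text.
SEATS = 200
ACTIVE_USERS = 80
PRICE_PER_SEAT = 5_000  # low end of the quoted Base SAS range

# ASSUMPTION: hypothetical blended compute rate, for illustration only.
RATE_PER_COMPUTE_HOUR = 12.0

contracted = seat_license_cost(SEATS, PRICE_PER_SEAT)
utilization = seat_utilization(ACTIVE_USERS, SEATS)
effective_per_active_user = contracted / ACTIVE_USERS
break_even_hours = break_even_compute_hours(contracted, RATE_PER_COMPUTE_HOUR)

print(f"Contracted licensing: ${contracted:,.0f} per year")
print(f"Seat utilization: {utilization:.0%}")
print(f"Effective cost per active user: ${effective_per_active_user:,.0f}")
print(f"Break-even at the assumed rate: {break_even_hours:,.0f} compute hours")
```

At 40% seat utilization, the effective price per active user is 2.5 times the list price per seat. Swap in your own contract figures and negotiated compute rate before drawing conclusions.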

Organizations that migrate from SAS to Snowflake or Databricks typically report 40–60% reductions in total platform cost within the first year, even after accounting for migration expenses and cloud infrastructure.

MigryX migration methodology — Discover, Convert, Validate, Deploy

2. The Talent Gap: Proprietary Skills Are Shrinking

The workforce dynamics are stark. University programs have largely stopped teaching SAS as a primary language. Graduates enter the market fluent in Python, SQL, and R. The pipeline of new SAS programmers has been declining for years, while the existing SAS workforce is aging and retiring.

This creates a compounding problem. As SAS expertise becomes scarcer, the cost of hiring and retaining SAS programmers increases. Organizations that maintain large SAS estates find themselves in bidding wars for a shrinking talent pool, paying premium salaries for skills that are becoming obsolete.

Meanwhile, Python ranks at or near the top of widely cited language popularity indices such as TIOBE, with millions of developers and a vibrant ecosystem of training resources, community support, and open-source libraries. Hiring a Python data engineer is faster, cheaper, and more sustainable than hiring a SAS programmer.

Talent Market Snapshot

- Python developers available globally: Estimated 15+ million and growing

- SAS programmers available globally: Estimated 500,000 and declining

- University data science programs teaching SAS as primary language: Under 10%

- University data science programs teaching Python as primary language: Over 85%

MigryX Compass: From Chaos to Clarity

Every enterprise migration starts with the same challenge: understanding what you actually have. MigryX Compass scans your entire legacy estate — SAS programs, ETL jobs, stored procedures, macro libraries — and delivers a complete dependency graph, complexity score for every asset, and a recommended migration wave plan. What takes consulting teams weeks of manual inventory work, MigryX Compass accomplishes in hours.
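
For readers who want to see how a dependency graph turns into a wave plan, the sketch below groups programs into waves so that nothing migrates before the programs it depends on. It is an illustration built on Python's standard `graphlib` module, not MigryX Compass's actual algorithm, and the program names are hypothetical.

```python
from graphlib import TopologicalSorter


def plan_waves(dependencies: dict[str, set[str]]) -> list[list[str]]:
    """Group programs into waves; each wave depends only on earlier waves.

    `dependencies` maps a program to the set of programs it depends on.
    Raises graphlib.CycleError if the dependencies are circular.
    """
    sorter = TopologicalSorter(dependencies)
    sorter.prepare()
    waves = []
    while sorter.is_active():
        # Everything returned here has all of its dependencies already done.
        ready = sorted(sorter.get_ready())
        waves.append(ready)
        sorter.done(*ready)
    return waves


# Hypothetical legacy estate: program -> programs it reads output from.
estate = {
    "load_customers.sas": set(),
    "load_orders.sas": set(),
    "join_orders.sas": {"load_customers.sas", "load_orders.sas"},
    "monthly_report.sas": {"join_orders.sas"},
}

for number, wave in enumerate(plan_waves(estate), start=1):
    print(f"Wave {number}: {', '.join(wave)}")
```

A real plan would also weigh complexity and business criticality when ordering work inside each wave; this sketch handles dependencies only.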

3. Cloud-Native Architecture Advantages

The lakehouse architecture — whether implemented on Snowflake, Databricks, or a combination — offers structural advantages that proprietary platforms cannot match without fundamental re-architecture.

Elastic Scaling

SAS servers are typically sized for peak workload, which means they are over-provisioned most of the time. Month-end processing may require 10x the compute of a normal day, but the server runs 24/7 at that capacity. Cloud-native platforms scale up for peak and scale down (or to zero) immediately after. This elasticity alone drives significant cost savings.
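
The effect is easy to quantify. The sketch below compares capacity-hours for a server sized for the peak day against capacity that tracks each day's demand. The 10x month-end multiplier comes from the text; the 30-day month and two peak days are assumptions made for illustration, and the comparison is in capacity units rather than dollars because unit prices differ between platforms.

```python
def provisioned_unit_hours(daily_demand: list[float]) -> float:
    """Capacity-hours for a server sized for the peak day and run 24/7."""
    return max(daily_demand) * 24 * len(daily_demand)


def elastic_unit_hours(daily_demand: list[float]) -> float:
    """Capacity-hours when capacity is resized to match each day's demand."""
    return sum(daily_demand) * 24


# ASSUMPTION: 28 normal days at 1 unit, 2 month-end days at 10x (hypothetical mix).
demand = [1.0] * 28 + [10.0] * 2

fixed = provisioned_unit_hours(demand)
elastic = elastic_unit_hours(demand)
utilization = elastic / fixed

print(f"Peak-provisioned: {fixed:,.0f} unit-hours")
print(f"Elastic: {elastic:,.0f} unit-hours")
print(f"Utilization of the fixed server: {utilization:.0%}")
```

Under these assumptions, the fixed server sits idle for most of the capacity it was bought for. The daily granularity is a simplification; scaling within the day would widen the gap further.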

Separation of Storage and Compute

In the lakehouse model, data lives in open formats (Parquet, Delta, Iceberg) on cloud object storage. Any compatible compute engine can read it: Snowflake for SQL analytics, Databricks for ML workloads, with dbt orchestrating SQL transformations on either. This decoupling removes vendor lock-in at the data layer, the most critical layer of all.

Native Concurrency

SAS servers handle concurrent users poorly. When multiple analysts submit jobs simultaneously, they compete for the same CPU and memory, degrading everyone's experience. Snowflake's virtual warehouse model and Databricks' job clusters provide isolated compute for each workload, ensuring consistent performance regardless of how many users are active.

4. The Open-Source Ecosystem Is Accelerating

The open-source data ecosystem has reached a level of maturity, reliability, and enterprise-readiness that was unimaginable a decade ago. Apache Spark, which powers Databricks, routinely runs petabyte-scale production workloads. Pandas, scikit-learn, and PyTorch are used by the world's most sophisticated data organizations, from quantitative hedge funds to pharmaceutical companies running clinical trials.

Critically, the open-source ecosystem is not just broader — it innovates faster. When a new machine learning technique is published, a Python implementation appears within weeks. SAS procedure updates happen on an annual release cycle. This velocity gap means that organizations on proprietary platforms are perpetually behind the state of the art.

The governance and quality concerns that once gave enterprises pause about open source have been addressed by commercial wrappers. Databricks provides enterprise security, lineage, and support around Spark. Snowflake offers managed infrastructure with SOC 2, HIPAA, and FedRAMP compliance. You get open-source innovation with enterprise-grade operations.

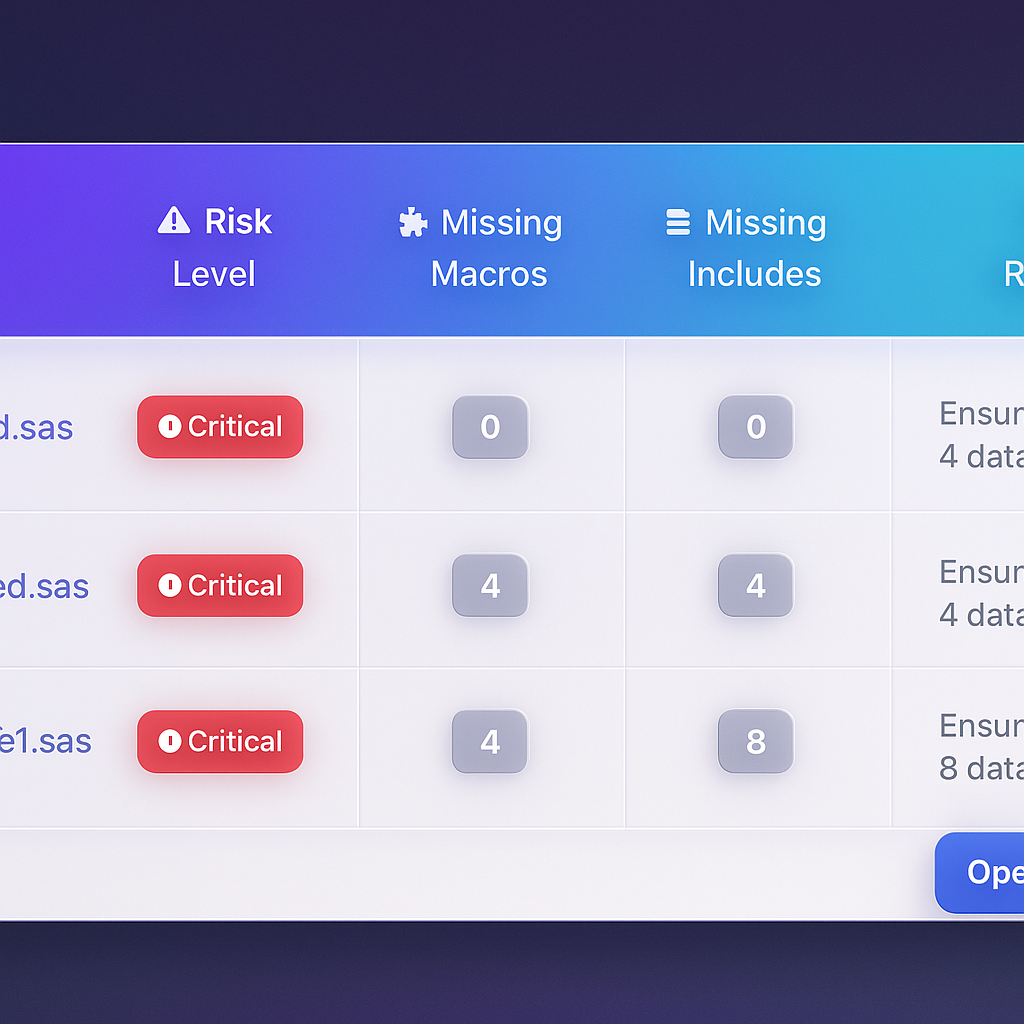

MigryX risk analysis identifies high-complexity programs and recommends optimal migration sequencing

Data-Driven Migration Planning with MigryX

MigryX does not just estimate complexity — it quantifies it. Every program receives a composite score based on lines of code, unique constructs, macro nesting depth, external dependencies, and data volume. Program managers use these scores to build realistic wave plans, allocate resources accurately, and set expectations with stakeholders based on data, not guesswork.
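
To illustrate what a composite score of this kind can look like, here is a minimal sketch that normalizes the five inputs named above and combines them with weights. The weights, caps, and sample programs are hypothetical and chosen only for illustration; this is not MigryX's scoring formula.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ProgramMetrics:
    lines_of_code: int
    unique_constructs: int
    macro_nesting_depth: int
    external_dependencies: int
    data_volume_gb: float


# ASSUMPTION: hypothetical caps (value treated as "maximally complex") and weights.
CAPS = {
    "lines_of_code": 5_000,
    "unique_constructs": 50,
    "macro_nesting_depth": 5,
    "external_dependencies": 10,
    "data_volume_gb": 1_000,
}
WEIGHTS = {
    "lines_of_code": 0.20,
    "unique_constructs": 0.25,
    "macro_nesting_depth": 0.25,
    "external_dependencies": 0.20,
    "data_volume_gb": 0.10,
}


def complexity_score(metrics: ProgramMetrics) -> float:
    """Weighted sum of capped, normalized metrics on a 0-100 scale."""
    total = 0.0
    for name, weight in WEIGHTS.items():
        normalized = min(getattr(metrics, name) / CAPS[name], 1.0)
        total += weight * normalized
    return round(100 * total, 1)


# Hypothetical programs at opposite ends of the range.
simple_extract = ProgramMetrics(200, 5, 0, 1, 2.0)
nested_macro_job = ProgramMetrics(5_000, 40, 6, 12, 800.0)

print("simple_extract:", complexity_score(simple_extract))
print("nested_macro_job:", complexity_score(nested_macro_job))
```

Capping each input keeps one extreme metric, such as a very large data volume, from dominating the score. In practice the weights would be calibrated against the effort observed in completed migrations.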

5. Regulatory and Audit Pressures Favor Transparency

Financial regulators, healthcare compliance officers, and internal audit teams are increasingly asking questions that proprietary platforms struggle to answer. Where exactly is my data? What transformations were applied? Can I reproduce this result exactly? Who had access to this dataset at the time of this report?

Open platforms built on version-controlled code (Python scripts in Git, SQL models in dbt) and immutable data formats (Delta Lake with time travel, Iceberg with snapshot isolation) provide auditable, reproducible pipelines by design. Every transformation is a Git commit. Every data state is a recoverable snapshot. Every access is logged.

SAS programs running on a shared server with opaque binary datasets offer none of these guarantees without extensive additional tooling. The gap between what regulators expect and what legacy platforms deliver is widening every year.

Proprietary vs. Open Platform: Capability Comparison

| Capability | Proprietary (SAS, SPSS, etc.) | Open Lakehouse (Snowflake, Databricks) |
|---|---|---|
| Pricing Model | Fixed seat/server licensing | Consumption-based, pay per second |
| Scaling | Vertical (buy bigger server) | Elastic horizontal, auto-scale |
| Talent Pool | Small and shrinking | Massive and growing (Python/SQL) |
| Data Format | Proprietary binary (SAS7BDAT) | Open (Parquet, Delta, Iceberg) |
| Version Control | Limited / add-on | Native Git integration |
| ML/AI Integration | Separate SAS Viya modules | Native (MLlib, MLflow, Snowpark ML) |
| Concurrency | Shared server, resource contention | Isolated compute per workload |
| Innovation Velocity | Annual release cycle | Continuous / community-driven |
| Audit / Lineage | Manual / third-party tools | Built-in (Unity Catalog, Access History) |
| Cloud Multi-Region | Complex / not native | Native multi-region, multi-cloud |
| Real-Time Streaming | Not native | Structured Streaming, Snowpipe |

The Tipping Point Is Here

Each of these five trends individually justifies serious consideration of migration. Together, they create a tipping point. Organizations that delay migration face compounding costs: escalating licensing fees, increasing difficulty hiring, growing technical debt, and widening capability gaps relative to competitors who have already moved.

The organizations moving fastest are not waiting for their SAS contracts to expire. They are using automated migration tools to accelerate the transition, running parallel environments during the cutover period, and reinvesting the licensing savings into cloud platform capacity and team upskilling.

What Successful Migration Looks Like

The organizations that navigate this transition well share common characteristics:

- Executive sponsorship: The CFO and CTO align on the financial and technical case simultaneously.

- Automated conversion: Tools like MigryX translate 70–85% of code automatically, reducing the timeline from years to months.

- Phased approach: Migrate in waves, proving value incrementally rather than attempting a big-bang cutover.

- Talent investment: Budget for training existing SAS analysts in Python and SQL. Most experienced analysts can become productive in the new stack within 8–12 weeks.

- Validation rigor: Run parallel environments and compare outputs systematically before decommissioning legacy workloads.
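
The validation step in particular lends itself to automation. Below is a minimal sketch of a parallel-run comparison: it matches rows from the legacy and migrated outputs by key and reports anything that is missing or differs beyond a floating-point tolerance. The function name, row layout, and tolerance are assumptions made for illustration; a production harness would typically also compare row counts, schemas, and aggregates.

```python
import math


def compare_outputs(legacy, migrated, key, rel_tol=1e-9):
    """Return human-readable mismatches between two lists of row dicts."""
    legacy_by_key = {row[key]: row for row in legacy}
    migrated_by_key = {row[key]: row for row in migrated}
    mismatches = []
    for k in sorted(legacy_by_key.keys() | migrated_by_key.keys()):
        old, new = legacy_by_key.get(k), migrated_by_key.get(k)
        if old is None or new is None:
            side = "legacy" if old is None else "migrated"
            mismatches.append(f"{k}: missing from {side} output")
            continue
        for column in sorted(old.keys() | new.keys()):
            a, b = old.get(column), new.get(column)
            numeric = isinstance(a, (int, float)) and isinstance(b, (int, float))
            # Numbers are compared with a tolerance; everything else exactly.
            same = math.isclose(a, b, rel_tol=rel_tol) if numeric else a == b
            if not same:
                mismatches.append(f"{k}.{column}: legacy={a!r} migrated={b!r}")
    return mismatches


# Hypothetical outputs from a parallel run.
legacy_rows = [{"id": "A", "total": 100.0}, {"id": "B", "total": 250.5}]
migrated_rows = [
    {"id": "A", "total": 100.0000000001},  # within tolerance
    {"id": "B", "total": 251.0},           # genuine difference
    {"id": "C", "total": 1.0},             # row the legacy job never produced
]

for problem in compare_outputs(legacy_rows, migrated_rows, key="id"):
    print(problem)
```

A tolerance matters because legacy and cloud engines can differ in the last digits of floating-point results without either being wrong. An empty mismatch list over an agreed number of cycles is a reasonable gate for decommissioning a legacy workload.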

The question is no longer whether to migrate from proprietary analytics to the lakehouse. The question is how fast you can execute the migration without disrupting the business. That is an engineering problem with known solutions.

The trends are clear, the tooling is mature, and the early movers have proven the path. The cost of inaction now exceeds the cost of migration. For data leaders still evaluating, the time to act is now.

Why MigryX Is the Foundation of Every Successful Migration

The challenges described throughout this article are exactly what MigryX was built to solve. Here is how MigryX transforms this process:

- Automated discovery: MigryX Compass scans thousands of programs and produces a complete inventory with dependency mapping in hours.

- Complexity scoring: Every asset is scored by code complexity, data volume, and business criticality — enabling precise effort estimation.

- Wave planning: MigryX recommends optimal migration waves based on dependencies, ensuring no pipeline breaks mid-migration.

- 4–8x faster delivery: Enterprises using MigryX consistently report migration timelines compressed from years to months.

MigryX combines precision AST parsing with Merlin AI to deliver 99% accurate, production-ready migration, turning what used to be a multi-year manual effort into a streamlined, validated process.
